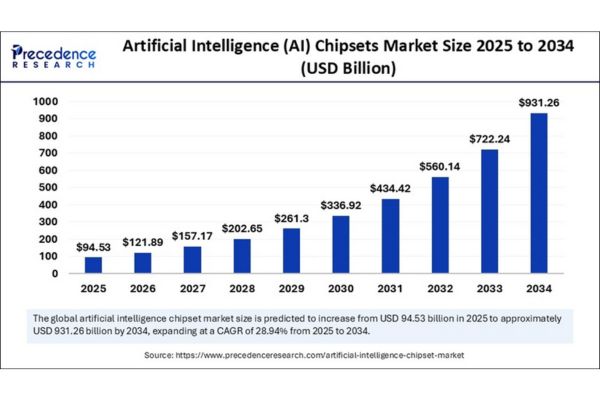

The artificial intelligence (AI) chipsets market is forecast to surge from USD 94.53 billion in 2025 to a staggering USD 931.26 billion by 2034, expanding at an impressive CAGR of 28.94% as enterprises race to power next-gen AI applications with efficient, high-performance processors.

Rapid advances in generative AI, machine learning, and autonomous systems are fuelling this growth. The proliferation of real-time decision-making, smart devices, and industrial automation is driving heavy investment in high-performance, energy-efficient chipsets.

Artificial Intelligence (AI) Chipsets Market Key Highlights

- In 2024, the global market size reached USD 73.32 billion; it is projected to reach USD 94.53 billion in 2025.

- North America captured the largest revenue share in 2024, thanks to industry giants and a rich innovation ecosystem.

- Asia Pacific is expected to record the fastest growth rate, led by strategic investments in China, Japan, and South Korea.

- The GPU segment held about 36% market share in 2024, dominating due to its parallel processing power for deep learning.

- Cloud AI processing accounted for 52% market share in 2024, underscoring the shift to scalable, flexible deployment.

- Top players include NVIDIA, AMD, Intel, Google, Amazon, Meta, TSMC, Samsung, Apple, and more.
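The share figures above can be turned into rough dollar values by multiplying them by the 2024 market size. The sketch below does only that; the implied values are back-of-the-envelope estimates derived from the rounded percentages quoted here, not figures taken from the underlying report, and the names `SHARES_2024` and `implied_revenue` are illustrative.

```python
# Rough sketch: convert the quoted 2024 market shares into implied USD values.
# Inputs are the rounded figures quoted in the highlights; the outputs are
# estimates derived here, not numbers published in the report.

MARKET_SIZE_2024 = 73.32  # USD billion, as quoted above

# Approximate 2024 shares quoted in the highlights (fractions of the total).
SHARES_2024 = {
    "GPU chipsets": 0.36,
    "Cloud AI processing": 0.52,
}


def implied_revenue(total_market: float, share: float) -> float:
    """Return the revenue implied by a market share, in the units of total_market."""
    if not 0.0 <= share <= 1.0:
        raise ValueError("share must be a fraction between 0 and 1")
    return total_market * share


implied = {
    segment: implied_revenue(MARKET_SIZE_2024, share)
    for segment, share in SHARES_2024.items()
}

for segment, value in implied.items():
    print(f"{segment}: about USD {value:.1f} billion (implied)")
```

The two segments belong to different breakdowns (chipset type and deployment mode), so their implied values overlap and should not be added together.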

Artificial Intelligence (AI) Chipsets Market Revenue

| Year | Global Market Size (USD Billion) | Forecast CAGR (2025–2034) | Dominant Region | Fastest-Growing Region |
| --- | --- | --- | --- | --- |
| 2024 | 73.32 | 28.94% | North America | Asia Pacific |
| 2025 | 94.53 | 28.94% | North America | Asia Pacific |
| 2034 | 931.26 | 28.94% | North America | Asia Pacific |
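The revenue figures can be checked against the quoted growth rate with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) − 1. The sketch below is a minimal consistency check, assuming the 28.94% rate applies to the nine annual steps from 2025 to 2034; the function names `cagr` and `project` are illustrative.

```python
# Minimal sketch: check that the quoted market sizes and CAGR agree.
# Assumes the 28.94% rate covers the nine annual steps from 2025 to 2034.


def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at the given rate for the given years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the table above (USD billion).
SIZE_2024 = 73.32
SIZE_2025 = 94.53
SIZE_2034 = 931.26
QUOTED_CAGR = 0.2894

years = 2034 - 2025  # nine compounding periods

implied_cagr = cagr(SIZE_2025, SIZE_2034, years)
projected_2034 = project(SIZE_2025, QUOTED_CAGR, years)
growth_2024_2025 = SIZE_2025 / SIZE_2024 - 1

print(f"Implied CAGR 2025-2034: {implied_cagr:.2%}")
print(f"2034 size at the quoted CAGR: USD {projected_2034:.2f} billion")
print(f"Growth from 2024 to 2025: {growth_2024_2025:.2%}")
```

Compounding the 2025 figure at the quoted rate lands within a few hundredths of a billion of the 2034 figure, a gap attributable to rounding, and the 2024 to 2025 step implies nearly the same annual rate.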

Is AI Reshaping the Chipset Market?

Artificial intelligence is steering a technological revolution by enabling chipsets to support advanced applications in automotive, healthcare, consumer electronics, and data centers. AI hardware accelerates deep learning, computer vision, and natural language tasks, allowing companies to process large volumes of data with minimal latency.

AI’s role is most visible in the advancement of edge computing technologies, pushing processing closer to the data source for real-time inference and privacy benefits. Manufacturers are now investing in ultra-fine node designs and application-specific chip architectures (ASICs, TPUs) to target mission-critical sectors, from autonomous vehicles to Industry 4.0 robotics.

What Drives Explosive Market Growth?

The surge in AI-powered applications across verticals like autonomous vehicles, smart healthcare, finance, and logistics is intensifying demand for chips capable of handling data-intensive tasks with unparalleled speed and efficiency. Venture capital and government incentives are catalyzing capacity expansion, while innovation from giants like NVIDIA, Intel, and Google sets new processing standards.

Are AI Chipsets the Next Opportunity Frontier?

What Trends Will Shape the Industry?

- The rise of Edge AI is creating demand for low-power, high-efficiency chips that enable smart cities and autonomous machines. Will this disrupt legacy architectures?

- Rapid adoption of ultra-fine node semiconductor technologies (below 7 nm) is improving energy efficiency, performance, and scalability. Can manufacturers keep up with the pace of innovation?

- AI is being integrated into consumer electronics and vehicles. Are we approaching a world where every device thinks for itself?

Regional Analysis

North America: Epicenter of AI Innovation

North America captured the largest market share in 2024, primarily due to its unmatched focus on AI research and a robust innovation ecosystem. Leading enterprises like NVIDIA, Intel, Google, Meta, Tesla, and OpenAI have established North America as the main hub for semiconductor and cloud-based AI solutions.

The passage of the CHIPS and Science Act by the U.S. government has dramatically boosted domestic semiconductor manufacturing and research funding, further strengthening the region’s leadership position. Increased demand from sectors such as defense, aerospace, and automotive has prompted major purchases of AI chipsets for model training and edge applications.

Asia Pacific: Growth Driven by Policies and Tech Investment

Asia Pacific is projected to be the fastest-growing region, powered by heavy investments from governments and the private sector, particularly in China, Japan, and South Korea. National semiconductor policies and a push towards industrial automation have resulted in dynamic growth across the region.

Strategic investments in AI chipset designs and advanced packaging technologies are enabling applications in autonomous manufacturing, smart cities, and edge computing.

Europe, Latin America, Middle East & Africa: Emerging Adoption

In Europe, Latin America, and the Middle East & Africa, AI chipset adoption is rising due to expanding regulatory initiatives, smart city investments, and sovereign projects aimed at technological independence. European and Middle Eastern governments have encouraged the development of domestic semiconductor capabilities, while Latin America is investing in urban digitization, fueling demand for AI chipsets in a variety of sectors.

Segmentation Overview

Chipset Type: GPUs & ASICs Dominate

The market was dominated by GPUs, which held approximately 36% share in 2024, due to their unrivaled parallel processing capabilities for deep learning, NLP, and computer vision tasks.

ASICs (application-specific integrated circuits) are now growing at the fastest rate, especially in inference-heavy environments, supported by tech giants like Google (TPUs), Amazon (Inferentia), and Huawei.

Technology Node: Move to Ultra-Fine Nodes

Chips built on 7–10 nm nodes led the market with a 40% share in 2024, balancing high performance and energy efficiency for hyperscale cloud upgrades.

Rapid growth is expected for sub-7nm technologies (including 5nm and 3nm), offering increased transistor density and processing speed to enable complex model training and edge computing. Industry leaders such as NVIDIA, TSMC, Intel, Samsung, and Apple are accelerating production of these advanced chips.

Processing Type: Inference & Training Divide

Inference chipsets accounted for the largest share (~58% in 2024), valued for energy-efficient, low-latency AI responses in both cloud and edge environments. Tech companies like Meta and Amazon deploy tailored silicon for billions of daily queries.

Training chipsets are growing rapidly, spurred by investments in foundation and multimodal models, including large language models, and in sovereign AI clusters.

Deployment Mode: Dominance of Cloud AI

Cloud AI processing held about 52–63% market share in 2024, serving as the backbone for scalable, flexible AI workloads and large-scale model deployment. All major cloud providers (AWS, Google Cloud, Microsoft Azure) continue to expand AI-powered data center infrastructure.

Edge AI processing is set for the fastest gains, meeting the need for decentralized, real-time intelligence in autonomous vehicles, wearables, smart city infrastructure, and industrial robots.

On-premise AI chipsets are increasingly popular among organizations that prioritize data privacy, low latency, and regulatory compliance, particularly in finance, healthcare, government, and biotech sectors.

End-Use: Consumer Electronics & Automotive Lead

Consumer electronics held the leading revenue share in 2024, with surging demand for AI-enabled smartphones, smart speakers, tablets, and wearables featuring custom neural processing units (NPUs) for voice, image, and context-aware functionality.

Automotive is the fastest-growing end-use sector, as OEMs and suppliers invest in specialized chipsets for self-driving and electric vehicles (EVs), with technologies like adaptive cruise control and AI-powered safety features becoming standard. OEMs such as Tesla and BMW are active adopters of new AI hardware for advanced driver-assistance systems.

Recent Breakthroughs and Leading Companies

Innovations in 2024–2025 include the launch of Intel’s Xeon 6 CPUs and Gaudi 3 AI accelerator chips, aimed at advancing data center efficiency and competing strongly with AMD and NVIDIA in the AI sector. NVIDIA’s H100 Tensor Core GPUs have been pivotal in large model training, while major cloud providers have rapidly expanded GPU and ASIC deployment.

Key Companies

- NVIDIA

- Intel

- AMD

- TSMC

- Samsung

- Amazon

- Meta

- Apple

- Huawei

Challenges & Cost Pressures

- Supply Chain Disruptions: Ongoing geopolitical tensions, particularly between the US and China, continue to create uncertainty in raw material access and fabrication capabilities.

- High Development Costs: The advanced R&D, fabrication, and IP investments required to develop bleeding-edge chipsets can be prohibitive for new entrants and smaller firms.

- Pricing Pressure: With demand surging, the market faces upward pricing trends for leading-edge chips, potentially impacting access for emerging markets and some end-users.

Case Study: Automotive AI Chipset Integration

Automotive industry leaders like Tesla and BMW dramatically increased their investment in automotive-grade AI chipsets in 2024, catalyzed by new self-driving and advanced driver-assistance systems. Custom chips tailored for autonomous decision-making, sensor fusion, and in-vehicle computer vision have become central to next-generation vehicles, underscoring the transformative power of AI hardware in real-world applications.